We Don’t Know What Our Computers Are Doing

Why Trust Them for Elections?

Computers are like a black box — we don’t really know what they’re up to inside. A series of recently discovered vulnerabilities only drives home the point, and further calls into question the US’s reliance on electronic voting systems.

Most of us really don’t know what our computers are doing. We just know that we use them for working, communicating, shopping, banking, and having fun. But the reality is that IT experts don’t know either.

This was demonstrated by several recent stories of security failures and hardware flaws of an extraordinary nature. It’s a problem for cybersecurity in general, but in particular, and most disconcertingly, for our already at-risk election infrastructure.

Most people have no idea where or by whom their computers were made. And they don’t care. They just want them to work, and they trust that the experts know what’s going on inside and how to keep them safe.

But what if that weren’t the case?

Hard(ware) Problems


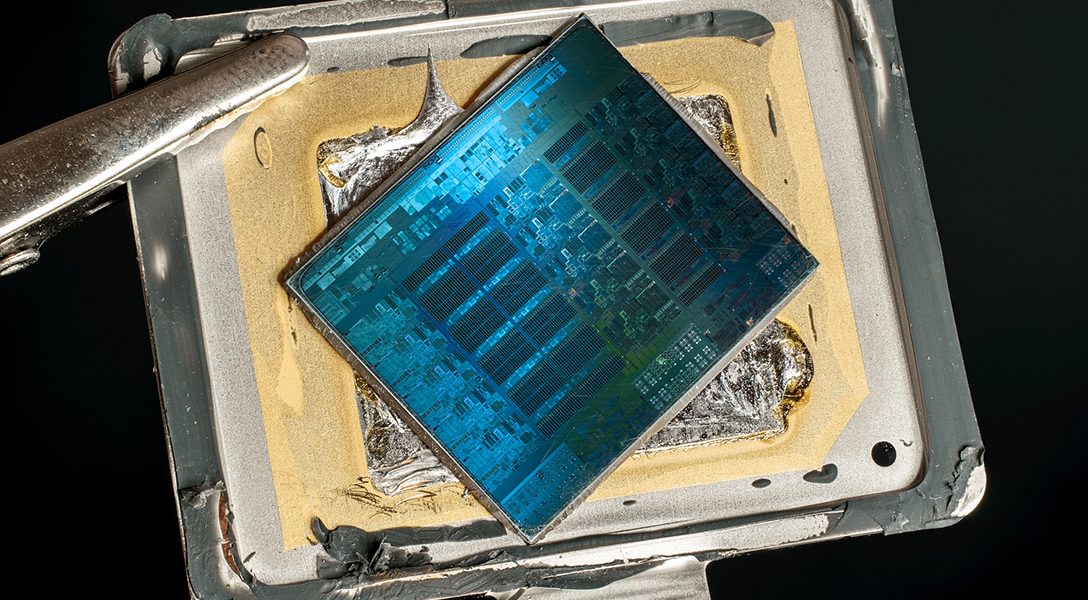

Within just the past year, several major vulnerabilities became public that cast doubt on whether we’re really in control of our computers. The first was the revelation that an operating system has been secretly running in the background of millions of computers.

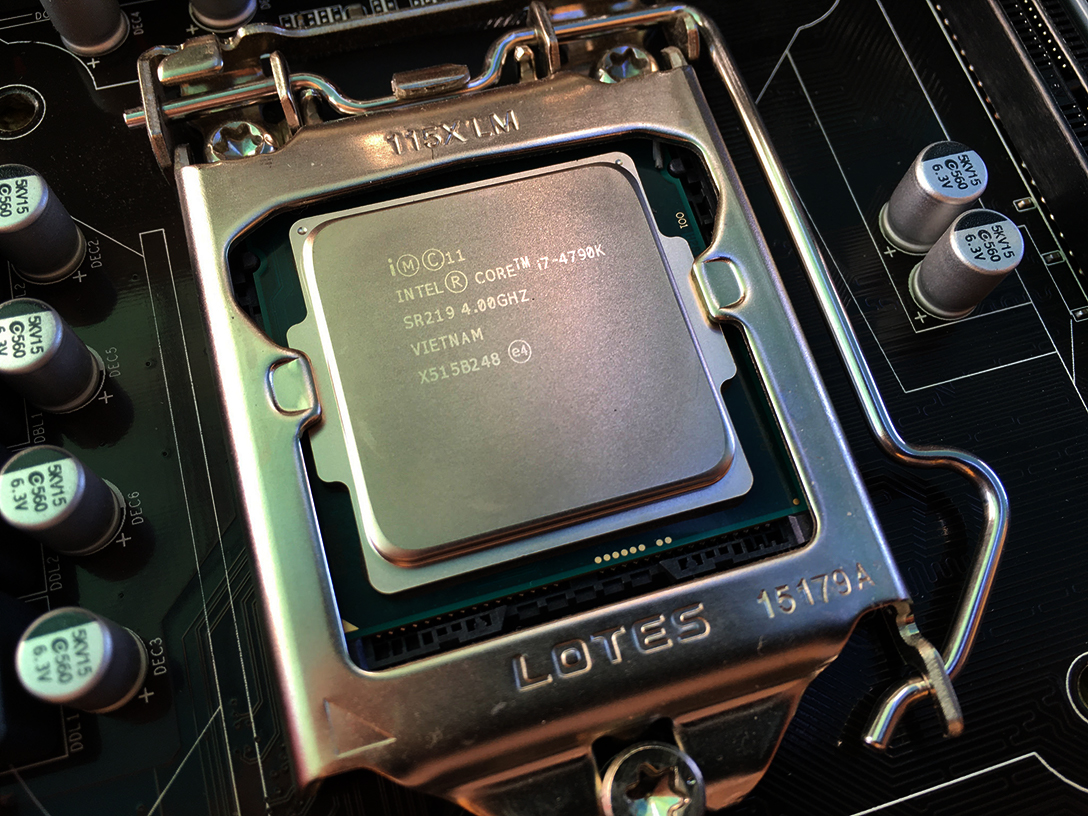

For decades, two brands have dominated the market for desktop and laptop operating systems: Windows and Mac. But it turns out that if your computer has an Intel processor (and there’s a good chance it does), you’re more than likely also running MINIX at the most fundamental level.

Never heard of it? You’re not alone. Didn’t realize you were running it? Don’t worry — neither did its creator.

This revelation of a secret operating system running in the background of almost every Intel processor since 2008 — and the general problem of “black box” computer hardware that we all take for granted — has made waves recently in the cybersecurity world.

Add to that several nightmare processor hardware bugs that came to light earlier this year, known as Spectre and Meltdown, which potentially allow an attacker to steal information and escalate their privileges on a system. IT experts are still dealing with the problem, and finding similar bugs.

It’s easy to see why cybersecurity professionals have been worrying. But so should election officials.

These disclosures come at an inopportune time. Tensions are high over allegations of Russian hacking into Democratic Party computers and several state election systems. At the same time, recent revelations of homegrown security gaps in US voting equipment have raised new fears of possible cyberattacks. Also troubling are recent leaks of the US intelligence community’s own hacking toolkits and capabilities.


Computers have always been susceptible to hacking, but these recent hardware risks are especially worrisome — because IT professionals aren’t sure what the hardware is doing, when and where the next vulnerability will appear, or how to fix it.

Meet MINIX — the ‘Most Widely Used Computer Operating System in the World’


Last fall, Andrew Tanenbaum, the creator of the MINIX operating system, wrote an open letter to Intel, the CPU manufacturing giant. Tanenbaum originally designed MINIX as a teaching tool. He expressed surprise and thanks upon learning that his creation was being used inside Intel’s chips — making it probably the most widely used computer operating system in the world, even though no one had realized it. But he was miffed that Intel didn’t tell him. He found out through press reports.

And in a follow-up note he sounded an alarm:

Many people (including me) don’t like the idea of an all-powerful management engine in there at all (since it is a possible security hole and a dangerous idea in the first place), but that is Intel’s business decision and a separate issue from the code it runs. … I certainly hope Intel did thorough security hardening and testing before deploying the chip, since apparently an older version of MINIX was used. Older versions were primarily for education and newer ones were for high availability. Military-grade security was never a goal.

When we think of an operating system like Windows or Mac, most of us think about it being installed on our hard drives. Occasionally those hard drives go bad, or get infected with viruses. Then we have to replace them and reinstall the operating system from scratch.

But what Tanenbaum is referring to is something very different — and potentially very dangerous.

This operating system is installed on a chipset on the computer’s motherboard, known as the Intel ME-11 management engine, that manages the central processor, the brains of the computer. And you probably have no way of knowing what it’s doing, or of getting to it, turning it off, or removing it.

So just what can this hidden management engine do? According to Intel:

Built into many Intel® Chipset–based platforms is a small, low-power computer subsystem called the Intel® Management Engine (Intel® ME). The Intel® ME performs various tasks while the system is in sleep, during the start process, and when your system is running. This subsystem must function correctly to get the most performance and capability from your PC. This utility checks that the Intel® ME subsystem is running and communicating properly up to the operating system.

Sounds benign. But according to security researchers, it can also potentially read any part of the computer memory and then transmit that information over a network. And it could allow for remote access.

And because it is at a lower level of operation than your regular operating system, you would never know about it.

“Putting in chips like the ME is a terrible idea because it opens a potential door to hackers of all kinds, including state actors,” Tanenbaum told WhoWhatWhy. “If it can be exploited, it will be exploited.”

Back to the (Open) Source


So just what is MINIX? You may not have heard of it, but there’s a good chance you’ve heard of Android, the dominant mobile operating system, currently on billions of phones worldwide. Android runs on top of Linux, a powerful open-source operating system (meaning the programming code, the blueprint of the software, is freely available and can be modified by users). Linux powers a good deal of the internet and most supercomputers. Linux, in turn, was inspired by Andrew Tanenbaum’s MINIX, which itself was modeled on the granddaddy of them all, UNIX, created by engineers Dennis Ritchie and Ken Thompson at Bell Laboratories in the 70s. Apple’s OS X is also based on a UNIX derivative.

And why MINIX? Because Intel could. In 2000, MINIX was released under the Berkeley Software Distribution (BSD) license, a software license that allows users to use and modify the source code. The license is very permissive: users who modify the source code are not required to make their modifications open-source. Linux, by contrast, is licensed under the General Public License (GPL), a so-called “copyleft” license that requires anyone who distributes a modified version to share the modified source code as well.

This makes the BSD license attractive to companies — such as Apple and Google, who have made extensive use of permissively licensed software — who don’t want their modified source code to be shared with the public. As Tanenbaum noted in regard to Intel, “I have run across this before, when companies have told me that they hate the GPL because they are not keen on spending a lot of time, energy, and money modifying some piece of code, only to be required to give it to their competitors for free.”

So Intel is running this powerful operating system on this chip — and the public can’t see the modified source code, can’t know what it is doing, and can’t control it.

But let’s not heap all the scorn on Intel. Advanced Micro Devices (AMD), Intel’s chief competitor, has a similar management engine for its CPUs. Only theirs is on the CPU chip itself. And like Intel’s MINIX-powered microcontroller, no one knows for sure exactly what it does or is capable of.

Government Backdoor?


Tanenbaum had one final follow-up note at the end of his open letter to Intel:

If I had suspected they might be building a spy engine, I certainly wouldn’t have cooperated, even though all they wanted was reducing the memory footprint (= chip area for them). I think creating George Orwell’s 1984 is an extremely bad idea, even if Orwell was off by about 30 years. People should have complete control over their own computers, not Intel and not the government. In the US the Fourth Amendment makes it very clear that the government is forbidden from searching anyone’s property without a search warrant. Many other countries have privacy laws that are in the same spirit. Putting a possible spy in every computer is a terrible development.

But is it really possible this platform could be used as a backdoor by government agencies, foreign or domestic?

“Of course. It is not just possible. It is happening. It is not an accident that these backdoors are there. Such were proposed with Clipper Chip decades ago, and supported by mainstream computer scientists,” Dr. Rebecca Mercuri, a computer scientist and expert in cybersecurity and computer forensics, told WhoWhatWhy. “Some of the entities and code have changed but otherwise nothing is different now.”

What she is referring to as “Clipper Chip” was a literal backdoor built by the NSA in the early 90s. It was a small microchip that was proposed as a security device, encrypting voice and data. The only problem was that hardwired decryption keys were built into each chip, allowing law enforcement to decrypt all data that was “secured” by the chip.

The Clipper Chip proposal faced harsh criticism from security experts, and ultimately the industry rejected it. We can see this same battle being fought more recently in the FBI’s demand that Apple provide a way for a terrorism suspect’s phone to be unlocked.

On the other hand, Edward Snowden’s leaks revealed that, in fact, Silicon Valley and the intelligence community often work hand-in-hand behind the scenes.

Meet Spectre and Meltdown — AKA Cybersecurity Hell


On the heels of the Intel/MINIX news, two major bugs surfaced earlier this year, known as Spectre and Meltdown.

Whereas most computer bugs can be traced back to a software problem (a flaw in the programming code), these two were embedded in the CPU hardware. And they were much harder to fix. You can always go back and fix bad program code, and then release an update or a patch to consumers. But if the problem is in the design of the hardware (or its built-in code), that’s a different animal. Most of the bugs have in fact been mitigated with software fixes that work around the flaws inherent in the CPUs, at the cost of a significant decrease in CPU speed. And problems remain.

The two bugs could allow attackers to read memory they are not authorized to access, giving them the ability to steal passwords and crypto-keys, for example, and to escalate their privileges on a system.

The fine technical details of these two major bugs have been published by the researchers who found them, but essentially they have to do with how modern CPUs operate and obtain their incredible speed. For example, CPUs rely on what is called in the tech world “speculative execution.” What this means is that when your CPU is crunching data, it will guess which instructions it will need to run next and start working on them before it knows for certain. If the CPU makes the right prediction, fantastic: it has saved some time and increased processing speed. If not, it simply “unwinds” the speculative work and proceeds with the correct information.

But unfortunately that architectural process leaves the computer susceptible to attack.
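To see why, consider a deliberately simplified sketch in Python. It is a toy model, not a working exploit and not a description of any real chip: the `ToyCPU` class, its `protected` set, and the `recover_byte` helper are all hypothetical names invented for illustration. The one real-world idea it borrows is that the “unwinding” discards the result of the speculative work but can leave traces behind in the processor’s cache, and those traces can be observed afterward. A real attack detects them by timing memory accesses; here a simple set lookup stands in for that timing.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCPU:
    """Hypothetical toy CPU: results are rolled back, cache contents are not."""
    memory: dict                              # address -> byte value (0-255)
    protected: set                            # addresses this program may not read
    cache: set = field(default_factory=set)   # probe lines currently cached

    def read(self, addr):
        """Attempt a read; a protected read returns None, as if it were refused."""
        # Speculation: the CPU runs ahead before the permission check finishes.
        value = self.memory[addr]
        # Side effect of the speculative work: a cache line chosen by the value
        # is loaded (standing in for an access to an attacker's probe array).
        self.cache.add(value)
        if addr in self.protected:
            # The check fails, so the result is "unwound" and never returned.
            # The cache line loaded above is NOT removed.
            return None
        return value


def recover_byte(cpu, addr):
    """Infer a protected byte from the cache line a refused read left behind."""
    cpu.cache.clear()        # start from an empty ("flushed") cache
    cpu.read(addr)           # the read is refused, but it warms one cache line
    for guess in range(256):
        # A real attack times each access; a set lookup stands in for timing.
        if guess in cpu.cache:
            return guess
    return None


cpu = ToyCPU(memory={0x10: 42}, protected={0x10})
leaked = recover_byte(cpu, 0x10)
print(leaked)
```

In this toy, the program is never handed the protected value directly, yet it prints 42, the byte stored at the protected address, because the leftover cache entry gives it away.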

And it is not just desktops and laptops that are at risk, but nearly every device running a modern computer chip, including phones and tablets. The flaws are so widespread that they attracted the attention of Congress, which wanted to know why the major IT companies had not disclosed the bugs earlier.

The IT world has already seen several variants of these attacks in the past months, and more will likely crop up. Just this week, additional attack vectors related to the Spectre and Meltdown weaknesses, collectively referred to as Foreshadow, were discovered in Intel chips.

Computers and Elections?


Up against hardware bugs, secret operating systems, and backdoors — not to mention your run-of-the-mill viruses, trojans, worms, spear phishing, social engineering, and Distributed Denial of Service (DDoS) attacks — computers are surely vulnerable. But how about the electronic voting machines that most Americans use to cast their votes?

Unfortunately, US voting machines have been repeatedly proven to be susceptible to hacking. And these voting machines are owned by private companies, who use closed-source programs and hardware, inaccessible to the public and cybersecurity experts for review.


“It is well-known that the slot machines used in casinos across the USA are far more secure, better tested, and [better] regulated than any of our voting machines,” says Mercuri. “We do know how to perform secure 100% counts correctly, but those in charge consistently choose not to effect the necessary controls. If we voted on slot machines, I might have better confidence than in the junk and fraud being foisted on the American public.”

So should US election officials ditch electronic machines altogether and go back to pencil, paper, and hand counting? Although electronic voting in one form or another is prevalent in the US, it has been largely rejected by Western European democracies.

Count Tanenbaum among those who want to move to paper ballots. In his view, hardware vulnerability meets government apathy on Election Day.

“Most state and local election authorities have no more knowledge of election security than a cockroach and as little interest,” he told WhoWhatWhy.

Related front page panorama photo credit: Adapted from Lenovo by Maurizio Pesce / Flickr (CC BY 2.0)