A provocative essay by a noted civil rights attorney on the dangers of censorship, and why he believes that “the only defense against a bad guy’s hate speech is a good guy’s counter-speech.”

OPINION

Which do you agree with: America has too much online hate speech? Or, America has too much online free speech? Both complaints can apply to the same words.

The difference depends on no more than whether you are the speaker or the listener. What comes from my mouth is free speech that deserves legal protection. You protest that what enters your ears is hate speech that must be prohibited.

Hate speech is a byproduct of free speech. Lose one and you lose the other.

Can a balance be struck between too much hate and too little freedom? Or is any restriction on speech an evil to be avoided at all costs? The latter has been the American way. The US seems to have lost its way on this fundamental concept when it comes to online speech.

Europe, especially Germany, is tilting the speech teeter-totter heavily toward restricting some offensive speech. The United States has long been an outlier, permitting virtually unrestricted speech. But free-speech advocacy in the US is moving from its strongest protector, the courts, to its weakest protectors: politicians and corporations.

A hundred years of often arcane First Amendment jurisprudence — refined to protect the sanctity of even the most offensive free speech — has been isolated from judicial scrutiny and privatized to the benevolent care of the world’s largest corporations. Nothing good can come from this.

Neither this new American model nor the European model offers much comfort to those of us old-fashioned “sticks-and-stones-may-break-my-bones-but-words-will-never-hurt-me” free-speech fundamentalists.

Lawmakers are focusing on how corporations such as Facebook and Twitter supervise what gets posted online. The wisdom of handing absolute control of the online free-speech spigot to profit-making corporations has been ignored. But I’m not in panic mode just yet. Free speech, even offensive free speech, is difficult to shut up.

A fundamental, and until now well-accepted, concept of liberty in the United States is that free speech, even offensive free speech, deserves and often requires protection. Will we, should we, allow the public forum on which most speech takes place these days — the internet — to be sanitized, allowing nothing offensive, vulgar, or possibly hurtful?

I’d rather see Congress address this question before getting into the nitty gritty of how much bare skin and how many swastikas Facebook permits.

Hate Speech Is Today’s Pornography

Way back in the days when pornography was viewed as a tempting tool of the devil and self-righteous prosecutors and zealous local judges struggled to protect society from dirty pictures, a good part of my civil rights legal practice involved defending the sources of such illicit pleasure.

Once a month or so I’d join a parade, led by two flag-carrying court officers, a black-robed judge, an assistant district attorney, clerks, and a dozen or so jurors from Boston’s Suffolk County Courthouse down the sidewalk to the nearest movie theater.

Film poster for Deep Throat, a noted pornographic film, 1972. Photo credit: Bryanston Pictures / Wikimedia

We’d all sit in an otherwise empty theater and watch something like Deep Throat College Cheerleaders Do the Team so the jurors could decide whether an average person applying contemporary community standards would find that the movie, taken as a whole, appealed to prurient interest, whether it depicted sexual conduct in a patently offensive way (I always wondered just who held that patent) and whether the movie lacked serious literary, artistic, political, or scientific value. That was — and still more or less is — the Supreme Court’s standard for obscenity.

It was a near-impossible task for run-of-the-mill jurors. Or judges. The most frank admission about the impossibility of separating protected free speech from unprotected obscene speech came from Supreme Court Justice Potter Stewart in Jacobellis v. Ohio (1964), with his confession that while he couldn’t define obscenity, “I know it when I see it.”

My standard was to peek at the judge and see whether his hands covered his eyes during the good scenes.

These days a 12-year-old with a TV remote or a cell phone can get his fill of the same porn that vice squads, district attorneys, and campaigning judges put so much effort into eradicating. Dirty speech turned out to be impossible to regulate. The good folks battling to root out bad speech pretty much threw in the towel. The Department of Justice doesn’t even report statistics on ordinary pornography crimes anymore. Ordinary porn. The Stormy Daniels kind. With grown-ups. Only child-related pornography is still prosecuted.

That history comforts me because hate speech is today’s pornography.

It isn’t holy rollers and sexual prudes decrying the evils of dirty speech who want to protect tender ears from harmful words and images these days. Now it is well-meaning opponents of fascism and bigotry who want to protect society from hatred and discrimination. Some of their wrath is directed at public rallies by right-wing organizations, such as the Charlottesville demonstrations. Those situations involve classic First Amendment questions about how government balances freedom of speech with preventing violent opposition.

But public streets and parks aren’t where most speech is found these days. The internet is the new public square for advocates of just about any conceivable concept. And in America, at least until recently, the government avoided any role in controlling what gets said online. Unlike in the days of pornography prosecutions, nobody is arrested for what they post online.

Even though Google, Facebook, and Twitter are larger than many governments — Facebook’s 2017 revenue of more than $40 billion is more than the budgets of 34 states — they are still private corporations.

Because they are private entities, the First Amendment does not apply to them. The First Amendment prohibits only the government, not private individuals and not private corporations, from interfering with free speech. The government can’t jail you for saying Trump is a jerk, at least not yet. But your boss, assuming you work for a private employer, can fire you for saying that. And since the internet is a creation of private enterprise, from Comcast to Google, there is no constitutionally protected right to free speech on the internet.

So what is being done about hate speech online?

Photo credit: Khalid Albaih / Flickr (CC BY 2.0)

America and Europe take two different paths to keep the worst of hate speech off the net. America’s initial reaction to regulating internet content, back in the days of dial-up modems when America Online was most people’s path to the web, was to throw its governmental hands in the air and shout, “OK, anything goes.” Internet service providers, Congress declared, only provided the means for communications but were not responsible for the content of those communications. This policy is enshrined in Section 230 of the 1996 Communications Decency Act: “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”

In other words, what a person posts online is that person’s speech, not the speech of Comcast or Facebook. The corporations are not responsible for mediating their customers’ posts. Instead, everybody with a way to post online (these days, almost everybody) became his or her own publisher. A.J. Liebling’s notion that “Freedom of the press is guaranteed only to those who own one” (The New Yorker, May 14, 1960, p. 109) was turned on its head. Now we all have our own personal presses, our own means of spreading our scribblings across the globe.

This was viewed as a great victory for free-speech advocates. Anybody could say whatever they wanted online. The future of free speech on the internet was unlimited, folks thought. “The Internet is a revolution in communications that will change the world significantly. The Internet opens a whole new way to communicate with your friends and find and share information of all types. Microsoft is betting that the Internet will continue to grow in popularity until it is as mainstream as the telephone is today,” Bill Gates said in 1996 (Time, September 16, 1996).

As it turned out, the internet grew into not quite this electronic Wild West. While visionaries may have envisioned an electronic future in which every user owned his own printing press, nobody appreciated that the delivery trucks and roadways standing between individual users and individual readers would be owned by what grew into the world’s largest corporations.

A new American model for the internet developed in which people could post whatever they wanted, without much in the way of restrictions (more on that later) and readers could search for whatever they wanted on the internet, but the internet service providers who stood between poster and postee — the Comcasts, Verizons, Googles, and Facebooks — had complete legal freedom to approve or disapprove any communication at any time for any reason. Without restriction. Without accountability for their decisions.

Until recently that has been the American model. Even as online hate speech became seen as an increasing problem, Congress remained as hogtied on what to do with it as it is on anything that involves more heavy lifting than naming a new post office in Possum Hollow, Arkansas. Because of congressional inability to do anything of any substance, Europe — Germany in particular — has taken the lead in resisting online hate speech.

Germany

Germany has long prohibited overt support of its Nazi past. Section 130 of the German Penal Code prohibits denial of or playing down the genocide committed under the National Socialist regime (§ 130.3), including through dissemination of publications (§ 130.4).

This includes public denial or gross trivialization of international crimes, especially genocide or the Holocaust. Swastikas cannot be publicly displayed. Hitler’s Mein Kampf was long permitted only in an unillustrated, 2,000-page scholarly version with 3,000 annotations by historians.

These restrictions applied broadly, including online. The past 12 months saw an expansion of Germany’s already distinctly un-American legal restrictions on online hate speech. A new Net Enforcement Law (NetzDG), which went into effect January 1, 2018, sets out 20 topics defining an online comment as “clearly illegal,” such as incitement to hatred or showing the swastika.

Photo credit: Adapted by WhoWhatWhy from mapswire / Wikimedia (CC BY-SA 4.0) and Mike MacKenzie / Flickr (CC BY 2.0).

Once posts are flagged by users, a social-media firm has 24 hours — extended to a week in complex cases — to remove those that contravene the statutory rules or face up to a €50 million ($60 million) fine for systematic violations.

NetzDG applies to all for-profit social media platforms with at least two million registered users in Germany. Practically, that means only Facebook, Google, Twitter, and Change.org, an online platform for sharing petitions with more than five million German users.

This new German law is in stark contrast to the American model. Germany places the legal burden for removing online hate speech on social media corporations. America places virtually no legal restrictions on internet content, but relies entirely on the good faith of mega-corporations to do the right thing. Whatever the corporations decide the right thing happens to be.

Let’s see how the two systems have worked out in the past year, since Germany’s NetzDG went into effect, while Mark Zuckerberg and Sheryl Sandberg have been compelled to perp walk before congressional committees to explain their voluntary efforts at cleaning up — or, as free speechers would say, sanitizing — Facebook.

German internet providers are not compelled to proactively screen content. The law only compels them to react to specific complaints. The government plays no role in reviewing specific actions.

Providers have to report every six months on the number of complaints they receive and what actions they took. They face huge potential fines for failing to remove hate speech — and no penalties for mistakenly removing posts that do not cross the line into illegal hate. It isn’t difficult to guess where the incentives lie for internet providers.

Even with those incentives, the numbers are not impressive. In the first six months after the law went into effect, Facebook received 1,704 complaints and removed 362 posts. Google and YouTube received 241,827 complaints and removed 58,297 posts. Twitter received 264,818 complaints and removed 28,645 posts. (Google, YouTube, and Twitter made it easier to lodge a complaint than Facebook did, which probably explains the difference in the number of complaints each received.) These sound like large numbers until they are compared with a 45-minute period during the Germany-Brazil World Cup football match in 2014 when there were 35.6 million tweets posted in Germany.

No fines were imposed on internet service providers in Germany in the first six months of the new law.

Photo credit: OpenClipart-Vectors / Pixabay

For all the frenzied opposition to Germany’s NetzDG law by civil libertarians, it appears not to have had any major impact, either on civil rights or on eliminating online hate speech. Compared with the billions of posts on the internet, the number of posts removed under the law is minuscule.

The law does not prohibit reposting deleted posts, so there is no way of knowing how much, if any, of the targeted hate speech was actually removed from circulation.

The main effect of the German law has been to provide internet providers with legal cover, with a safe harbor. They can be liable for allowing hate speech to be posted on their services only if they are notified of the speech and they fail to act. And fines are available only for systemic failures to act. It is unlikely that Google, Facebook, YouTube, or Twitter will ever be fined under this law.

Unintended Consequences of a Law

A glimpse of what could be down the internet road in America comes from a law President Trump signed last March, intended to prohibit online sexual exploitation, based on a House bill known as FOSTA, the Fight Online Sex Trafficking Act, and a Senate bill, SESTA, the Stop Enabling Sex Traffickers Act. These bills were among the few bipartisan acts of Congress last year.

In briefest summary, SESTA/FOSTA made it a crime — with a penalty of 10 years in prison — for “an interactive computer service” to operate “with the intent to promote or facilitate the prostitution of another person.” The law created an exception to the broad protection provided to internet providers by Section 230. For the first time, internet services, from Backpage to Facebook, could be criminally liable for the content of what their customers posted.

Unlike the German law, which requires internet service providers to act only after they receive a specific complaint about a specific post, the American law forces service providers to police themselves.

What happened next is a harbinger of how even well-meaning restrictions on online speech are likely to cause unintended consequences.

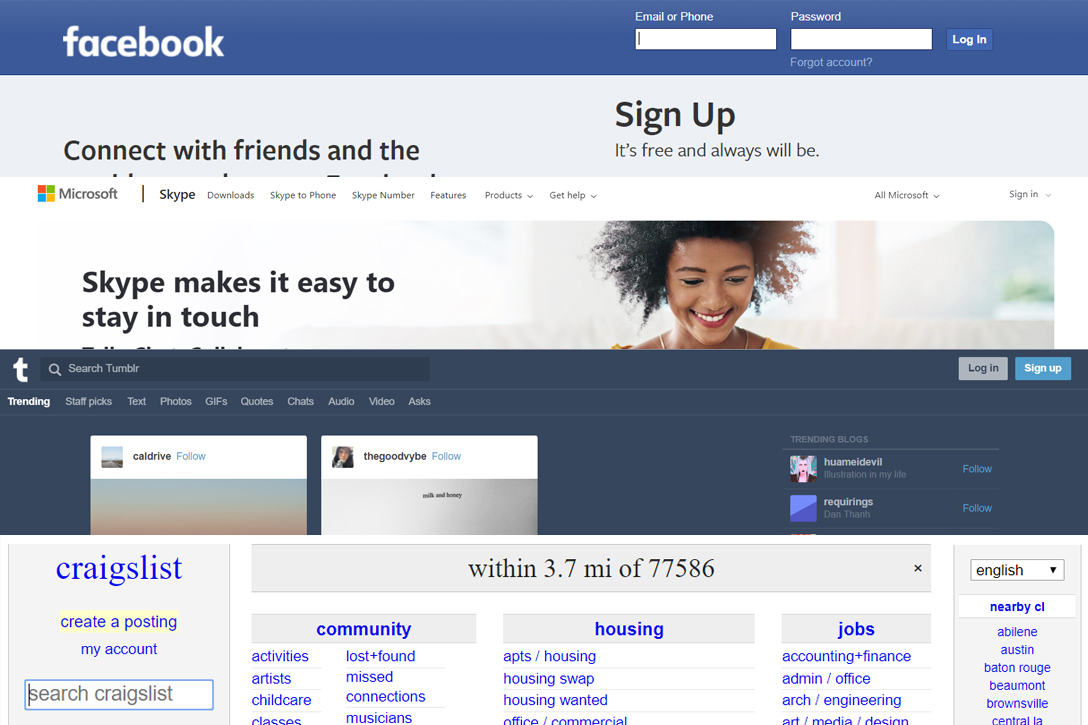

Rather than attempt to review all content for SESTA/FOSTA violations, most web services just dropped their personal ad sections. Two days after the law went into effect, Craigslist eliminated personal ads altogether and posted an apology with its announcement: “To the millions of spouses, partners, and couples who met through Craigslist, we wish you every Happiness!”

Facebook changed its community standards to ban even requests to get together that included such innocuous statements as “I’m looking for a good time tonight.” Within a month Microsoft scoured its web services, including Skype, of adult images and messages and outright banned “offensive language” on any of its platforms or services, from Xbox to email to cloud storage. “Offensive language” is whatever Microsoft deems offensive.

Photo credit: Facebook.com, Microsoft.com, Tumblr.com, and Craigslist.com

Similarly, just last month Tumblr announced a total ban on all “adult content.” Even though Tumblr had previously vigorously supported its right to display nudity and “adult” themes, its CEO Jeff D’Onofrio announced the change of policy with the comment, “There are [sic] no shortage of sites on the internet that feature adult content.”

This overreaction to the government’s attempt to battle sexual exploitation on the internet is a frightening harbinger of the inevitable next step: similar efforts to eliminate online hate speech. Can there be much doubt that attempts at legally regulating online hate speech will soon be in the works? It’s easy to picture. Just copy Germany’s law.

Fortunately for Facebook, Google, and the like, and for those of us of the traditionalist free speech civil rights persuasion, such laws are unlikely to stand up in court. While Google is free to post or not post whatever pleases its corporate mind, any attempt by the government to criminalize the posting of hate speech is likely to run into steep First Amendment opposition.

The legal dividing line between online speech that can be prohibited by law — can be made criminal — and speech that the government cannot prohibit is whether the speech constitutes “a true threat” of an intent to commit an act of unlawful violence to a particular individual or group of individuals as specified in Virginia v. Black (2003).

That sort of hate speech has to be specific as to its target and the imminence of harm, a difficult standard to meet. For example, in Elonis v. United States (2015), the Supreme Court reversed the conviction of a man who posted rap lyrics on Facebook telling his wife to “Fold up your [protection-from-abuse order] and put it in your pocket. Is it thick enough to stop a bullet?”

Run-of-the-mill hate speech of the “kill the Jews,” “deport the illegals,” “protect our bathrooms” varieties won’t meet that standard.

I feel comfortable relying on courts and judges to enforce the confusing and arcane First Amendment free-speech standards that have evolved over the centuries. The very difficulty of applying these standards will stop any wholesale legal assault by the government on online free speech. Just as with the futile war on pornography, a similar legal war on hate speech is likely to get mired down in arcane definitions and applications in the courts when faced with a huge body of First Amendment jurisprudence.

What concerns me more is the present model of privatizing the job of making the internet civil and polite. Do we really want to rely on Mark Zuckerberg, swell guy that he appears to be in film and fame, to protect the free-speech rights of people and organizations that are far from the political and cultural mainstream of his customers?

Most of the body of law that has evolved around the First Amendment involves speech that is offensive to the mainstream. I once represented a religious fundamentalist who wanted to post banners on Boston’s subways declaring that Santa was not in the Bible and did not exist. In December.

Who, besides weirdo civil rights types, would defend the right to tell kids that there is no Santa Claus? (Recent presidential comments aside.) I doubt that Facebook would. I once represented neo-Nazis who were denied a parade permit by Boston’s mayor. The judge who ruled for the Nazis noted that “if there is a bedrock principle underlying the First Amendment, it is that the government may not prohibit the expression of an idea simply because society finds the idea itself offensive or disagreeable” [Nationalist Movement v. City of Boston (1998)].

Neo-Nazi rally in Trenton, New Jersey, 2011. Photo credit: Bob Jagendorf / Flickr (CC BY-NC 2.0)

Could we expect the world’s largest corporations to apply this same “bedrock” principle to online content? After all, why should they? Their duty is not to their customers and certainly not to First Amendment principles — but to their shareholders.

We saw how Craigslist, Facebook, and Microsoft reacted to SESTA/FOSTA by pulling the plug entirely on any content that could possibly be offensive. And lots more that certainly wasn’t. There is no relationship between the body of First Amendment law — crafted to protect even offensive speech — and anything as soporific as Facebook’s Community Standards for what it allows to be posted and what its screeners and AI algorithms reject.

With most people’s speech being online now (When is the last time you saw somebody standing on a soapbox in a public square shouting out criticism of the government?), we’ve outsourced decisions about what gets spoken and what gets squelched to private corporations. Setting their own standards. For their own purposes. With no relationship to anybody’s legal rights except the corporations’ own.

What a sorry state we’re in. It’s frustrating for free-speech traditionalists to be advocating to protect the smallest proportion of public speech while being excluded from most of society’s discussions on politics, culture, and thought.

We need to recognize that mimicking the German model for removing online hate speech would mean abandoning the fundamental American tolerance for offensive, unpopular speech. It hurts to say it but, when it comes to speech in America, the National Rifle Association gets it right, better than Facebook does: The only defense against a bad guy’s hate speech is a good guy’s counter-speech.

With all the hoopla over how Facebook and Twitter restrict offensive speech, there has been no discussion of whether they should be allowed to do so in the first place. That conversation needs to take place.

Harvey A. Schwartz is a retired Boston civil rights attorney. He has argued two civil rights cases before the United States Supreme Court. His novel Never Again was published in November. It describes a frightening scenario in which something similar to the Holocaust happens in the near future in the United States.

Related front page panorama photo credit: Adapted by WhoWhatWhy from Grimace (Greg Borenstein / Flickr – CC BY-NC-SA 2.0).